Key Takeaways

- Nvidia's Rubin claim: Warm-water, single-phase direct liquid cooling (DLC) with ~45°C supply temperature; the company says no water chillers are necessary.

- Capex rotation, not deletion: The chiller plant shrinks, but pumps, coolant distribution units (CDUs), and controls grow significantly.

- Cooling doesn't go away: The bottleneck moves into distribution, controls, and commissioning. The real trade is in the plant room, not the silicon.

- Tropical implementation: Requires a hybrid approach with 40-50% chiller backup capacity because of high ambient temperature and humidity.

- Fault dynamics change: Liquid cooling fails FASTER but recovers FASTER than traditional systems, so it requires enhanced monitoring.


1. The Moment: One Sentence, Billions of Market Cap

CES 2026 wasn't supposed to be about HVAC, but a single line from Jensen Huang sent billions of market cap tumbling. Onstage, he declared that "no water chillers are necessary for data centres" when describing the warm-water DLC in Nvidia's Vera Rubin platform.

Street Reaction - Within Hours

Data centres represent roughly 10-15% of Johnson Controls' sales, ~10% for Trane, and 5% for Carrier. The market's linear assumption that "AI = more chiller demand" was suddenly under threat.

2. What Nvidia Actually Announced

Rubin is not a single chip but a rack-scale platform co-designed across compute, networking, power, and cooling. It combines GPUs, CPUs, and networking into one rack with sixth-generation NVLink interconnects and an MGX architecture for serviceability — part of the broader shift toward AI factory infrastructure and GPU-dense computing that is reshaping facility design.

The Interesting Bit for Facility Operators

Nvidia's technical blog describes warm-water, single-phase direct liquid cooling operating at a supply temperature around 45°C. Liquid captures heat more efficiently than air, enabling higher operating temperatures and reducing fan and chiller energy. The higher supply temperature allows dry-cooler operation with minimal water usage. Rubin also doubles liquid flow rates at the same CDU pressure head to ensure rapid heat removal under sustained extreme workloads.
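To make the flow-rate point concrete, here is a minimal sketch of the sensible-heat balance Q = ṁ · c_p · ΔT for a single-phase water loop. The rack load and baseline flow are hypothetical illustration values, not Nvidia specifications, and the water properties are rounded approximations.

```python
# Sensible-heat balance for a single-phase water loop: Q = m_dot * c_p * dT.
CP_WATER_KJ_PER_KG_K = 4.18   # approximate specific heat of water
DENSITY_KG_PER_L = 0.99       # approximate density of water near 45 °C
SUPPLY_C = 45.0               # supply temperature quoted in the article


def coolant_delta_t(heat_kw: float, flow_l_per_min: float) -> float:
    """Coolant temperature rise (K) for a given heat load and volumetric flow."""
    mass_flow_kg_s = flow_l_per_min * DENSITY_KG_PER_L / 60.0
    return heat_kw / (mass_flow_kg_s * CP_WATER_KJ_PER_KG_K)


rack_heat_kw = 120.0      # hypothetical rack load (assumption)
base_flow_l_min = 100.0   # hypothetical baseline flow (assumption)

for flow in (base_flow_l_min, 2 * base_flow_l_min):
    dt = coolant_delta_t(rack_heat_kw, flow)
    print(f"{flow:.0f} L/min -> dT = {dt:.1f} K, return ≈ {SUPPLY_C + dt:.1f} °C")
```

For a fixed heat load, doubling the flow halves the coolant temperature rise, which is why higher flow helps under sustained extreme workloads.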

"The air flow is about the same and the water that goes into it is the same temperature, 45°C... no water chillers are necessary for data centres." — Jensen Huang, CES 2026

The nuance lies in "necessary" — not "no cooling".

3. "No Chillers" ≠ "No Cooling": The Plant-Room Reality

Understanding the Terminology

- Chiller: A mechanical refrigeration plant that cools water to 6-12°C for CRAH/CRAC units. Energy-intensive (0.5-0.7 kW/ton) but decouples heat rejection from ambient temperature.

- Heat rejection: The unavoidable requirement to dump IT heat into the environment. Whether via evaporative towers, dry coolers, or district heating, the heat has to go somewhere.

- Direct Liquid Cooling (DLC): Coolant flows directly to heat-generating components via cold plates, capturing 70-80% of heat at the source.

- CDU (Coolant Distribution Unit): The heart of a liquid-cooling loop, combining the pumps, heat exchangers, and controls that manage coolant flow.
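Two of the figures in these definitions can be turned into quick arithmetic. The sketch below converts the quoted chiller efficiency range into a coefficient of performance (using 1 ton of refrigeration = 3.517 kW of heat removal) and splits a rack's heat between the liquid loop and room air. The 100 kW rack is a hypothetical example, not a figure from the article.

```python
KW_THERMAL_PER_TON = 3.517  # 1 ton of refrigeration = 3.517 kW of heat removal


def chiller_cop(kw_per_ton: float) -> float:
    """Coefficient of performance implied by an electrical kW/ton rating."""
    return KW_THERMAL_PER_TON / kw_per_ton


def split_heat(it_load_kw: float, liquid_fraction: float) -> tuple[float, float]:
    """Return (heat captured by cold plates, heat left for air cooling) in kW."""
    liquid_kw = it_load_kw * liquid_fraction
    return liquid_kw, it_load_kw - liquid_kw


for rating in (0.5, 0.7):
    print(f"{rating} kW/ton -> COP ≈ {chiller_cop(rating):.1f}")

rack_kw = 100.0  # hypothetical rack load (assumption)
for fraction in (0.70, 0.80):
    liquid, air = split_heat(rack_kw, fraction)
    print(f"{fraction:.0%} capture: {liquid:.0f} kW to liquid, {air:.0f} kW to air")
```

Even at 80% capture, a fifth of the rack's heat still has to be handled on the air side, which is part of why "no chillers" does not mean "no cooling".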

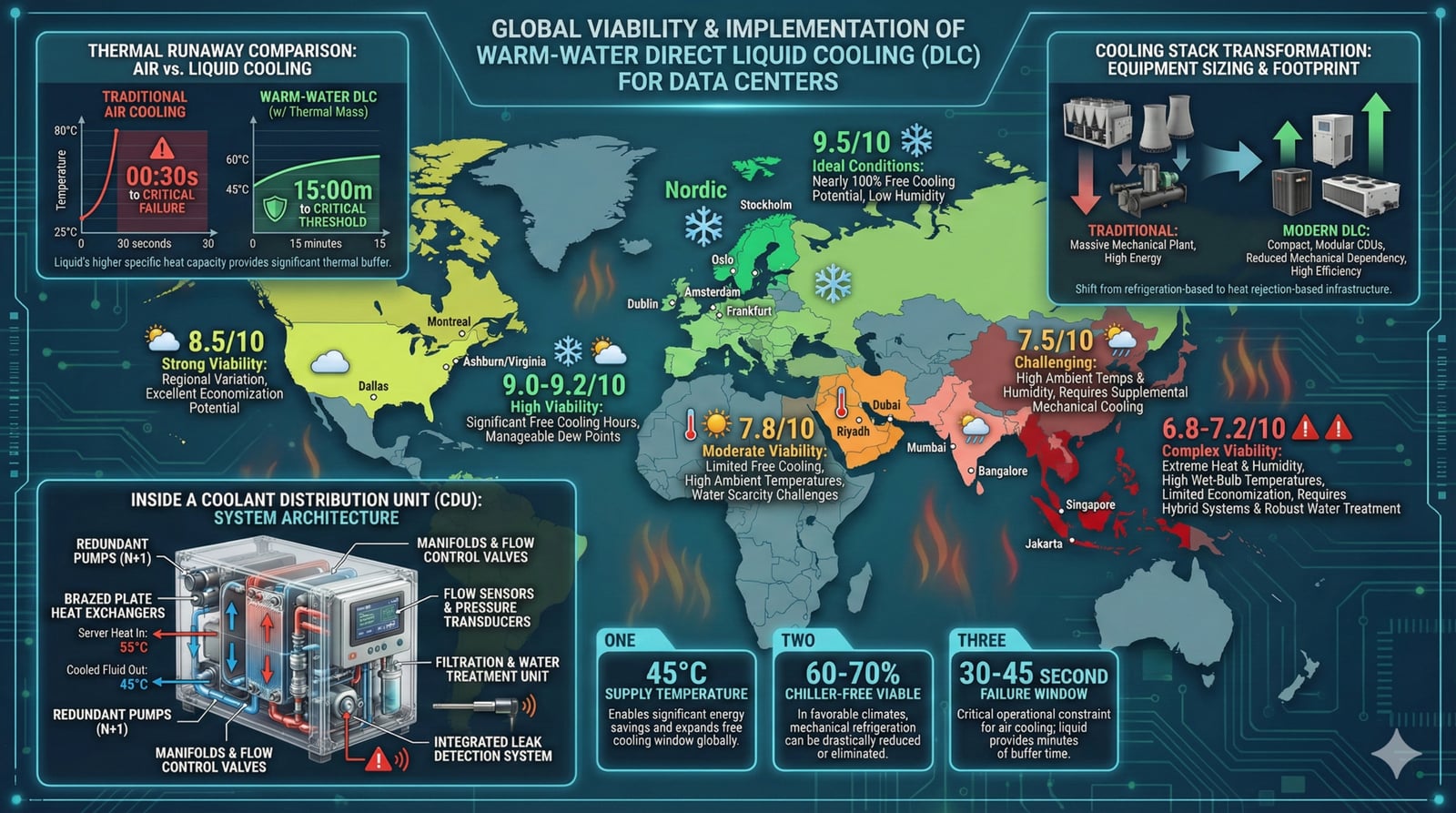

Before vs After: The Cooling Stack Transformation

Traditional Chiller-Based

Warm-Water DLC System

Climate Matters: Where "No Chillers" Actually Works

- Plausible "no chillers": Cold or temperate climates where ambient air rarely exceeds ~40°C (the ≈45°C supply temperature minus a ~5°C dry-cooler approach). Dry coolers can reject heat for most of the year.

- Fewer chiller hours: Mixed or dry climates where warm months necessitate mechanical refrigeration during peak temperatures; hybrid designs use smaller chillers running fewer hours.

- Marketing spin: Hot/humid regions or tight SLA requirements where warm-water loops would drive unacceptable outlet temperatures; mechanical chillers remain primary, but marketing emphasises "reduced chiller load".

Is Chiller-Free Cooling Possible in Tropical Data Centers?

4. Tropical Climate: The Physics Problem

Why Tropical Climate is the Worst-Case Scenario

Dry cooler physics: Heat Transfer = U × A × LMTD

where LMTD (log mean temperature difference) requires T_fluid_out > T_ambient + Approach_Temperature.

Temperate climate (Stockholm):

- Design ambient: 25°C (summer peak)

- Approach temperature: 5°C

- Fluid out: 30°C minimum achievable

- 45°C supply → 15°C margin ✓ WORKS YEAR-ROUND

Tropical climate (Jakarta):

- Design ambient: 35°C (frequent)

- Approach temperature: 5°C

- Fluid out: 40°C minimum achievable

- 45°C supply → 5°C margin ⚠️ MARGINAL

- Peak ambient: 38-40°C (heat waves)

- Fluid out: 43-45°C achievable

- 45°C supply → 0-2°C margin ✗ INSUFFICIENT
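The margin arithmetic above can be reproduced with a short sketch. The supply temperature, approach, and design ambients come from the text; the verdict thresholds (more than 5°C works, more than 2°C is marginal) are assumptions chosen only to match the three labels used here, and the `Site` class is a hypothetical helper.

```python
from dataclasses import dataclass

SUPPLY_C = 45.0    # warm-water supply temperature from the article
APPROACH_C = 5.0   # dry-cooler approach temperature from the article


@dataclass
class Site:
    name: str
    design_ambient_c: float


def margin_c(site: Site, supply_c: float = SUPPLY_C,
             approach_c: float = APPROACH_C) -> float:
    """Supply temperature minus the coldest fluid a dry cooler can deliver."""
    min_fluid_out_c = site.design_ambient_c + approach_c
    return supply_c - min_fluid_out_c


def verdict(margin: float) -> str:
    """Map a margin to a label; thresholds are illustrative assumptions."""
    if margin > 5.0:
        return "works"
    if margin > 2.0:
        return "marginal"
    return "insufficient"


sites = [
    Site("Stockholm (summer peak)", 25.0),
    Site("Jakarta (frequent)", 35.0),
    Site("Jakarta (heat wave)", 40.0),
]
for site in sites:
    m = margin_c(site)
    print(f"{site.name}: margin {m:.0f} °C -> {verdict(m)}")
```

A real design would use site weather files and the dry cooler's rated approach at the actual load rather than a single design point.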

Adiabatic/Evaporative Cooling Effectiveness

Wet-bulb depression = T_dry_bulb - T_wet_bulb

Result: In humid tropical air the wet-bulb depression is small, so evaporative pre-cooling provides minimal benefit.
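As a rough illustration of why humidity matters, the sketch below estimates wet-bulb temperature with Stull's (2011) empirical approximation, which is a swapped-in technique rather than anything the article specifies. It is intended for near sea-level pressure and roughly 5-99% relative humidity. The two example conditions are hypothetical, not measured site data.

```python
import math


def wet_bulb_stull(t_dry_c: float, rh_percent: float) -> float:
    """Approximate wet-bulb temperature (°C) using Stull's 2011 empirical fit."""
    return (
        t_dry_c * math.atan(0.151977 * math.sqrt(rh_percent + 8.313659))
        + math.atan(t_dry_c + rh_percent)
        - math.atan(rh_percent - 1.676331)
        + 0.00391838 * rh_percent ** 1.5 * math.atan(0.023101 * rh_percent)
        - 4.686035
    )


def wet_bulb_depression(t_dry_c: float, rh_percent: float) -> float:
    """Dry-bulb minus wet-bulb temperature: the headroom for evaporative cooling."""
    return t_dry_c - wet_bulb_stull(t_dry_c, rh_percent)


# Hypothetical conditions for comparison (assumptions, not site measurements).
conditions = {"humid afternoon": (32.0, 85.0), "dry afternoon": (32.0, 30.0)}
for label, (t_dry, rh) in conditions.items():
    print(f"{label}: depression ≈ {wet_bulb_depression(t_dry, rh):.1f} K")
```

At the same dry-bulb temperature, the humid case leaves only a small depression, so an adiabatic pad or spray system can pull the inlet air down by very little.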

Nighttime Free Cooling Opportunity

Temperate Climate (Frankfurt)

Tropical Climate (Jakarta)

Annual Free Cooling Hours Comparison

| Location | Free Cooling Hours (per year) | % of Year (of 8,760 h) |
|---|---|---|
| Stockholm | 7,500 hrs | ~86% |
| Frankfurt | 5,200 hrs | ~59% |
| Virginia | 4,000 hrs | ~46% |
| Singapore | 400 hrs | ~5% |
| Jakarta | 600 hrs | ~7% |

Source: Publicly available industry data and published standards. For educational and research purposes only.

Southeast Asian Climate Conditions

A warm and humid climate like Singapore or Jakarta is the worst-case scenario for free cooling. The combination of high temperature AND high humidity eliminates both primary free cooling mechanisms.

5. Fault Scenario Analysis: In-Depth

Critical Understanding: Liquid cooling systems fail FASTER but also recover FASTER than traditional air-cooled systems. This changes the entire operational response paradigm and requires enhanced monitoring, faster detection, and more aggressive redundancy.
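A lumped-capacity estimate shows why a liquid loop heats up quickly once flow stops: the coolant inventory is small relative to the heat load. The sketch below computes t = m · c_p · ΔT / Q for water. The loop volume, rack load, and allowable temperature rise are hypothetical values, and the model ignores the thermal mass of cold plates and piping, so treat it as an order-of-magnitude guide only.

```python
CP_WATER_J_PER_KG_K = 4186.0  # approximate specific heat of water
DENSITY_KG_PER_L = 0.99       # approximate density of water near 45 °C


def ride_through_seconds(loop_volume_l: float, heat_load_kw: float,
                         allowable_rise_k: float) -> float:
    """Seconds until stagnant coolant warms by allowable_rise_k at a fixed load."""
    coolant_mass_kg = loop_volume_l * DENSITY_KG_PER_L
    absorbable_energy_j = coolant_mass_kg * CP_WATER_J_PER_KG_K * allowable_rise_k
    return absorbable_energy_j / (heat_load_kw * 1000.0)


# Hypothetical inputs (assumptions): 100 kW rack, 15 K of allowable rise.
for volume_l in (50.0, 200.0):
    t = ride_through_seconds(volume_l, heat_load_kw=100.0, allowable_rise_k=15.0)
    print(f"{volume_l:.0f} L of coolant -> about {t:.0f} s before the limit")
```

With these assumed numbers the window is tens of seconds to a couple of minutes, which is the reason detection and pump failover need to be automatic rather than operator-driven.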

CDU Failure Modes and Impact Analysis

Primary Pump Failure

Motor burnout, impeller damage, or bearing seizure stops coolant flow to served racks.

Seal/Gasket Failure

Coolant leak at pump seals, heat exchanger gaskets, or quick-disconnect fittings.

VFD/Motor Controller

Variable frequency drive failure, power supply issues, or control board malfunction.

Control System Failure

PLC/BMS communication loss, sensor failure, or control logic malfunction.

6. Interactive: PUE Impact Calculator

Explore how different cooling architectures affect your facility's Power Usage Effectiveness and annual operating costs:


Algorithm & methodology sources: ASHRAE TC 9.9 thermal guidelines, Uptime Institute 2024 Global Survey, Nvidia Rubin thermal specifications, DCD 2025 cooling benchmarks, 10-year NPV at 8% discount rate, Monte Carlo 10K iterations, 8-region climate analysis.
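The two core quantities named here, PUE and a 10-year NPV at an 8% discount rate, can be sketched as follows. This is not the site's calculator: every input (loads, capex, annual saving) is a hypothetical placeholder, and the Monte Carlo and regional climate steps are not reproduced.

```python
def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


def annuity_factor(rate: float, years: int) -> float:
    """Present value of 1 unit received at the end of each year."""
    return sum(1.0 / (1.0 + rate) ** t for t in range(1, years + 1))


def npv(extra_capex: float, annual_saving: float,
        rate: float = 0.08, years: int = 10) -> float:
    """Net present value of a constant annual saving against upfront capex."""
    return annual_saving * annuity_factor(rate, years) - extra_capex


# Hypothetical 1 MW IT hall (all figures are assumptions for illustration).
chiller_pue = pue(it_kw=1000.0, cooling_kw=400.0, other_overhead_kw=100.0)
dlc_pue = pue(it_kw=1000.0, cooling_kw=150.0, other_overhead_kw=100.0)
print(f"Chiller-based PUE: {chiller_pue:.2f}, warm-water DLC PUE: {dlc_pue:.2f}")

result = npv(extra_capex=1_000_000.0, annual_saving=200_000.0)
print(f"10-year NPV at 8%: {result:,.0f}")
```

The annuity factor for 10 years at 8% is about 6.71, so a constant annual saving has to exceed roughly 15% of the extra capex for the NPV to turn positive.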


Direct Liquid Cooling vs Traditional CRAC: ROI Comparison

7. Pros and Cons Analysis

Short-Term (0-3 Years)

PROS

- Immediate OPEX reduction: 30-60% cooling energy savings

- Carbon credits: ESG compliance and reporting benefits

- Water conservation: 30-40% reduction with dry coolers — critical given the growing water stress challenges facing data centers

- Simplified maintenance: Fewer mechanical components

- No refrigerant compliance: Avoid HFC phase-out regulations

CONS

- High initial CAPEX: $500-2,000/kW for liquid cooling

- Technology immaturity: Limited vendor ecosystem

- Staff retraining: New skill requirements for operations

- Compatibility issues: Not all servers support liquid cooling

- Supply chain: Limited component availability

Long-Term (3-10 Years)

PROS

- Future-proof architecture: Ready for 100+ kW/rack AI densities, complementing modern hyperscaler power distribution architectures

- Regulatory compliance: Ahead of environmental regulations

- Heat reuse potential: District heating revenue ($20-50/MWh)

- Market differentiation: Green credentials for premium pricing

- Lower TCO: 30-40% reduction over 10-year lifecycle
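The district-heating figure in the list above can be put into annual terms with simple arithmetic. In the sketch below the $20-50/MWh price range comes from the article, while the 1 MW of recovered heat and the 60% utilisation factor are hypothetical assumptions, since heat demand is usually seasonal.

```python
HOURS_PER_YEAR = 8760


def annual_heat_revenue(recovered_heat_mw: float, price_per_mwh: float,
                        utilisation: float = 1.0) -> float:
    """Yearly revenue from selling recovered heat at a flat price per MWh."""
    return recovered_heat_mw * HOURS_PER_YEAR * utilisation * price_per_mwh


recovered_mw = 1.0  # hypothetical recovered heat (assumption)
for price in (20.0, 50.0):
    full = annual_heat_revenue(recovered_mw, price)
    partial = annual_heat_revenue(recovered_mw, price, utilisation=0.6)
    print(f"${price:.0f}/MWh: ${full:,.0f} at full offtake, "
          f"${partial:,.0f} at 60% utilisation")
```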

CONS

- Stranded assets: Existing chiller infrastructure devaluation

- Technology lock-in: Vendor dependency risks

- Climate change impact: Rising ambient temperatures

- Fluid management: Dielectric fluid lifecycle and disposal

- Unknown failure modes: Emerging issues from new technology

8. Regional Implementation Verdict

Implementation feasibility varies dramatically by geography. The verdict below is for tropical sites such as Singapore or Jakarta.

Implementation Verdict

Tropical sites require a hybrid approach with 40-50% chiller backup capacity. High humidity limits evaporative cooling, so design for a worst-case ambient of 38-40°C. Enhanced monitoring and 2N redundancy are essential.


9. Conclusion

The market panic-sold HVAC stocks after one line about "no chillers". Rubin doesn't kill cooling; it reprices the cooling stack. Heat still flows; investors and operators just need to follow it into pumps, CDUs, and control loops.

"The edge is knowing where the new bottleneck sits — inside the plant room, not on a silicon slide."

For operations professionals managing critical infrastructure:

- Understand the physics: "No chillers" works in cool climates. In the tropics, it's "fewer chiller hours" at best.

- Plan for hybrid: Target 60-70% chiller-free operation with robust backup for extreme conditions.

- Invest in monitoring: Liquid cooling's faster thermal dynamics demand faster detection and response.

- Train your teams: New technology introduces new failure modes requiring new skills and procedures.

- Design for climate change: Build margin for 2050 conditions, not just today's averages.

All content on ResistanceZero is independent personal research derived from publicly available sources. This site does not represent any current or former employer.

Original analysis inspired by @elongated_musk on Medium.